Live pairing burns engineering hours.

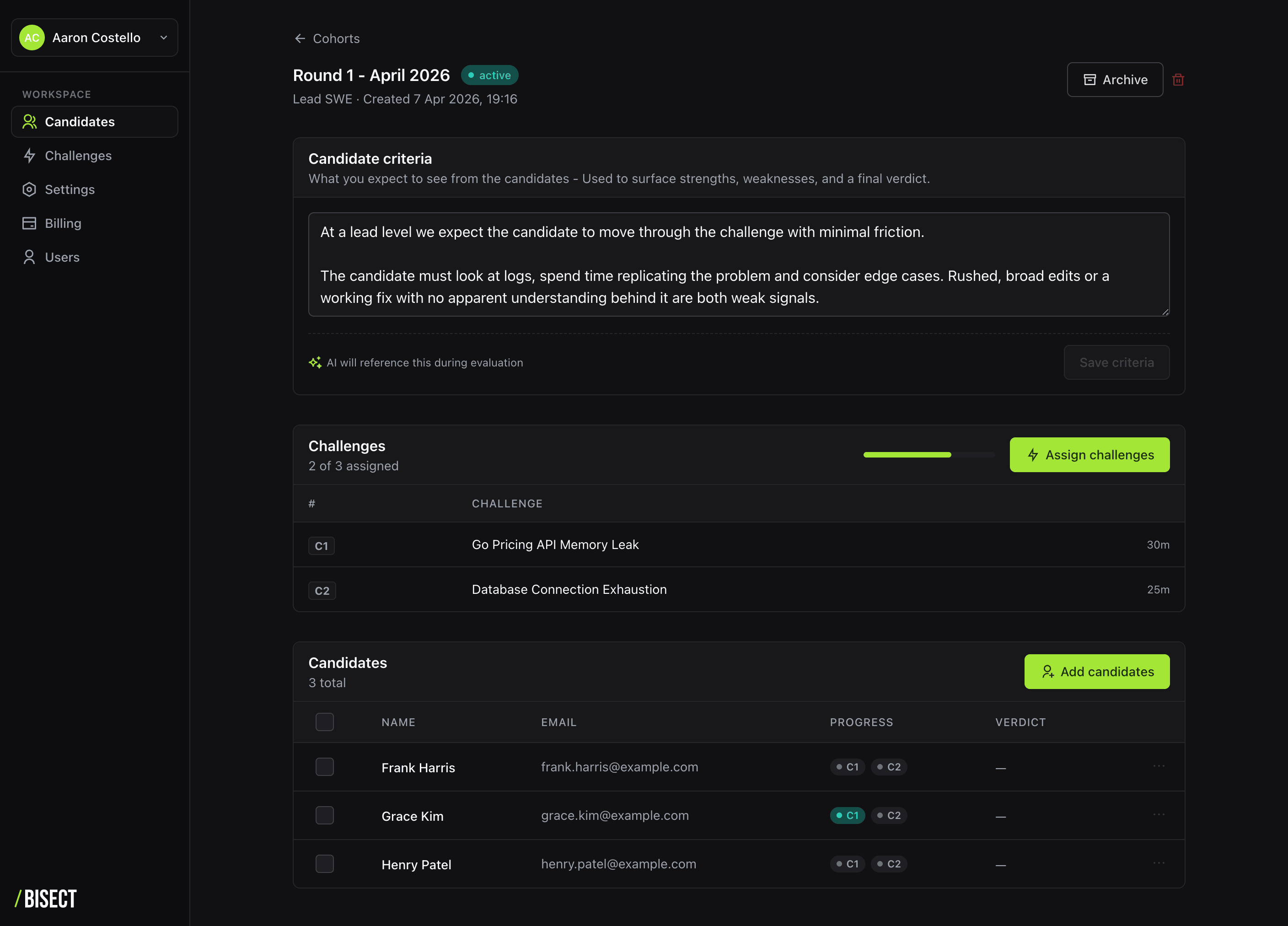

Forty-five minutes per candidate, per interviewer, with inconsistent signal. Senior engineers carry the load and burn out on it.

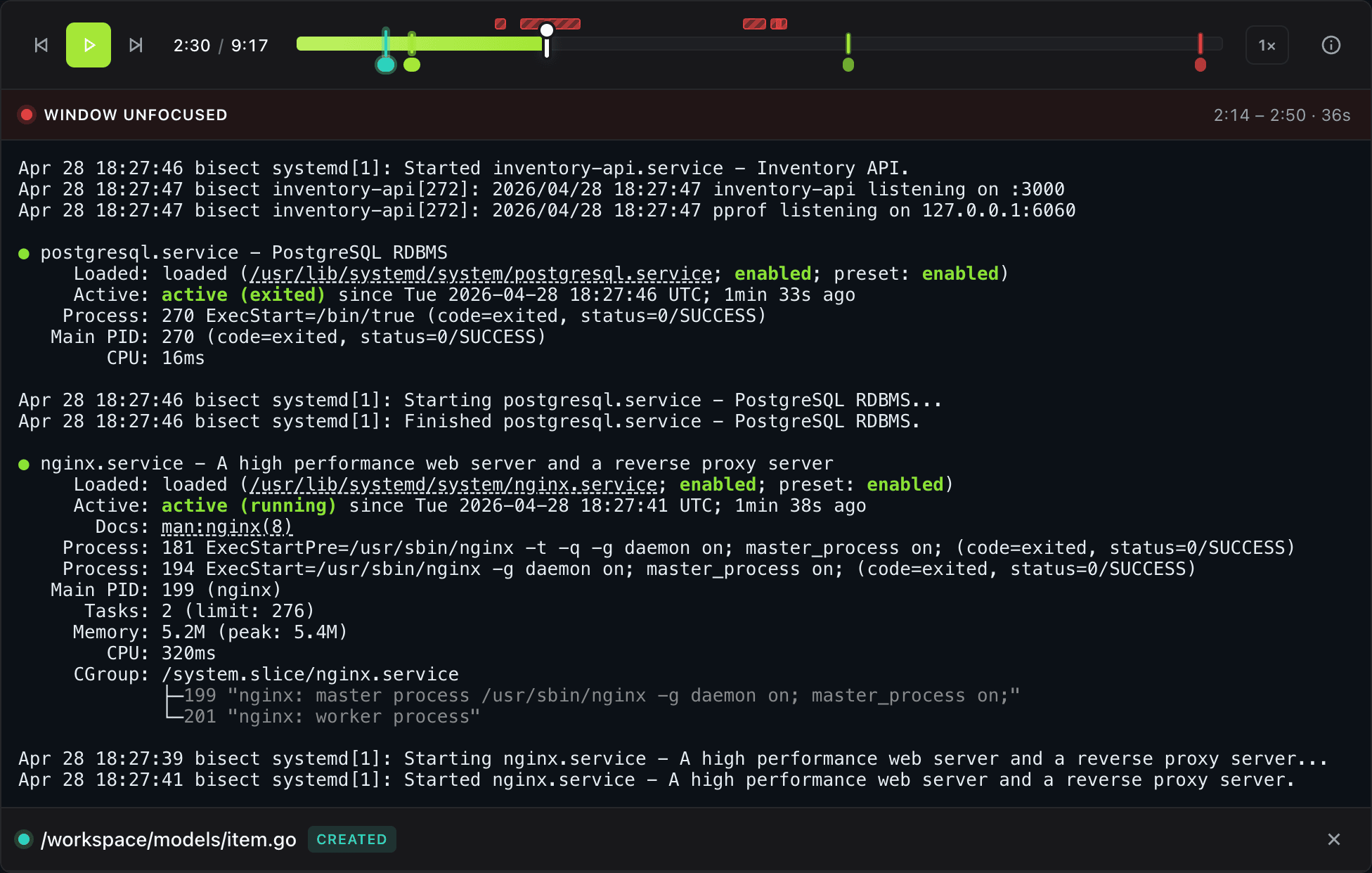

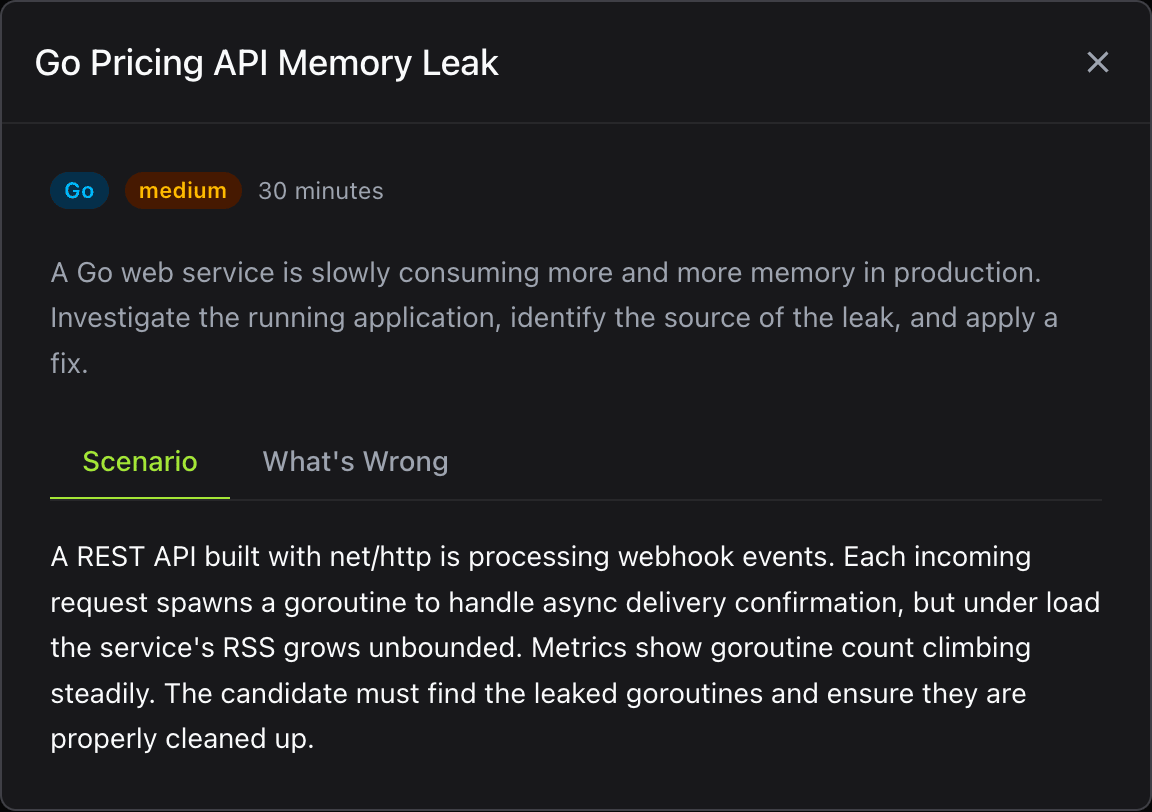

Async sessions instead. Review a recorded replay in five minutes, with event markers, a session summary, and a weak / acceptable / neutral / strong verdict.